Apple's patent applications always provide an interesting peek into what the company's researchers have been working on. In one of the more intriguing applications we've discovered, Apple appears to be researching 3D displays that would let the user look around an object.

In order to view a 3D object from various angles on your screen at present, you are required to use the mouse or keyboard to manipulate the object. This might simply involve clicking and dragging to pan or rotate an object. While functional, Apple considers this to be unintuitive and potentially frustrating to new users.

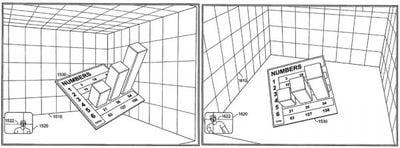

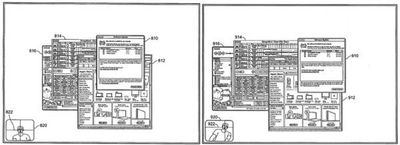

Apple proposes that a display could change the perspective of the 3D objects based on the user's relative position. Said display would detect the user's position through any suitable approach (such as video, infrared, or electromagnetic fields). Video is the most likely possibility, with a camera mounted at the top of the display itself allowing the computer to determine the user's location and position. The user could then move their head left and right to look around a 3D object, as shown in the example image above. Apple also suggests that the technique could be applied to 2D objects like windows to provide some added depth to traditionally flat objects.
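To make the geometry concrete, here is a minimal sketch of how a head-tracked display might shift objects as the viewer moves. The patent application does not spell out any particular math, so this assumes a simple pinhole model in which the screen sits at depth zero, the eye is some distance in front of it, and each object sits at some depth behind it. The function name `project_to_screen` and the coordinate conventions are hypothetical.

```python
def project_to_screen(point, eye):
    """Return where a point behind the screen appears on the screen plane.

    point: (x, y, depth), with depth >= 0 measured behind the screen.
    eye:   (x, y, distance), with distance > 0 measured in front of the screen.
    All values share one unit (e.g. centimetres); this is an assumed convention.
    """
    px, py, depth = point
    ex, ey, distance = eye
    if distance <= 0:
        raise ValueError("eye must be in front of the screen")
    if depth < 0:
        raise ValueError("depth must be zero or positive")

    # Fraction of the way from the eye to the point at which the sight line
    # crosses the screen plane.
    t = distance / (distance + depth)
    return (ex + (px - ex) * t, ey + (py - ey) * t)


# A point 10 units behind the screen, seen by a viewer 50 units away.
centered = project_to_screen((0.0, 0.0, 10.0), (0.0, 0.0, 50.0))
moved_right = project_to_screen((0.0, 0.0, 10.0), (10.0, 0.0, 50.0))
```

In this model an object lying exactly on the screen plane (depth zero) never moves, while deeper objects slide in the same direction as the viewer's head. That relative motion is what would give otherwise flat windows a sense of depth.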

In fact, Apple suggests that the software could be advanced enough to incorporate elements of the user's environment into the scene on the display.

For example, the electronic device may define visual properties of different surfaces of the displayed object (e.g., reflection and refraction characteristics), and apply the visual properties to the portions of the detected image mapped on each surface. Using this approach, surfaces with low reflectivity (e.g., plastic surfaces) may not reflect the environment, but may reflect light, while surfaces with high reflectivity (e.g., polished metal or chrome) may reflect both the environment (e.g., the user's face as detected by the camera) and light. To further enhance the user's experience, the detected environment may be reflected differently along curved surfaces of a displayed object (e.g., as if the user were actually moving around the displayed object and seeing his reflection based on his position and the portion of the object reflecting the image).
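As a rough illustration of the reflectivity idea described above, the sketch below blends a surface's own color with a color sampled from the camera image, weighted by how reflective the surface is, and then adds a light highlight regardless of reflectivity. This is an assumed, simplified linear model and not the method from the application; `shade_surface` and its parameters are hypothetical names.

```python
def shade_surface(base_color, env_color, reflectivity, light=0.0):
    """Blend a surface color with a sampled environment color.

    base_color, env_color: (r, g, b) tuples with channels in [0, 1].
    reflectivity: 0.0 (matte, e.g. plastic) to 1.0 (mirror-like, e.g. chrome).
    light: highlight intensity added to every channel, whatever the reflectivity.
    """
    if not 0.0 <= reflectivity <= 1.0:
        raise ValueError("reflectivity must be between 0 and 1")

    # The environment contributes in proportion to reflectivity.
    mixed = [
        (1.0 - reflectivity) * b + reflectivity * e
        for b, e in zip(base_color, env_color)
    ]
    # Every surface still picks up the light highlight; clamp to the valid range.
    return tuple(min(1.0, c + light) for c in mixed)


grey = (0.2, 0.2, 0.2)
camera_sample = (1.0, 0.0, 0.0)  # stand-in for a pixel from the camera image

plastic = shade_surface(grey, camera_sample, reflectivity=0.0, light=0.1)
chrome = shade_surface(grey, camera_sample, reflectivity=1.0, light=0.1)
```

Here the plastic-like surface shows only its own color plus the highlight, while the chrome-like surface shows the camera sample plus the highlight.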

Apple has been researching these sorts of novel display types for years. Back in 1995, the company even had a similar system built in the lab and referred to these displays as "Hyper Reality" displays. Apple, of course, is not the only company working on such technology. This YouTube video (thanks djellison) shows such a system in action on a makeshift Wii setup, demoed by Johnny Lee.