Twitter today announced a list of new features for the web and mobile that are designed to leverage the company's learning technology to drastically reduce harassment on the service.

The updates will place more tools in the hands of the users to "control your experience," and overall Twitter said it intends to be more vocal and "communicate more clearly" about the actions it takes in the realm of online safety.

The company's first step is an overall boost to its learning algorithms, which it says can detect when accounts are repeatedly violating the Twitter Rules or otherwise engaging in abusive behavior, and take action even before receiving an abuse report from other Twitter users. Abusive accounts will then face repercussions, "such as allowing only their followers to see their Tweets."

Our platform supports the freedom to share any viewpoint, but if an account continues to repeatedly violate the Twitter Rules, we will consider taking further action.

We aim to only act on accounts when we’re confident, based on our algorithms, that their behavior is abusive. Since these tools are new we will sometimes make mistakes, but know that we are actively working to improve and iterate on them everyday.

In terms of tools, users will now have even more filtering options for notifications, so that basic "egg" accounts without a profile photo, and those with an unverified email address or phone number, can all be filtered out completely. Expanding on the mute feature implemented last November, users will be able to more easily mute words or entire conversations right from the Twitter timeline. A length of time can now be selected as well, so content can be muted for one day, one week, one month, or indefinitely.

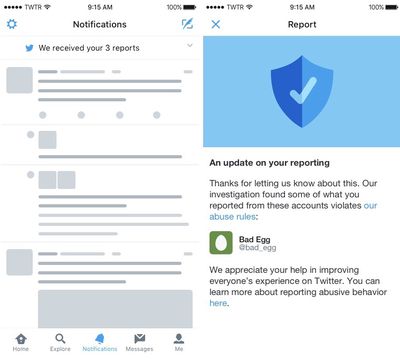

After negative press over Twitter's inaction on harassment on the site, the company noted in its blog post that a major focus moving forward will be "continuing to improve the transparency and openness of our reporting process." On a large scale, the company plans to be more consistent with its safety feature rollouts; on a small scale, it said that users will be notified more often about Tweets and accounts they've reported, from the moment the report comes in through the measures Twitter takes in dealing with the situation.

The report updates will be visible in the Notifications tab on Twitter.com and the Twitter iOS app. The company admitted that the process of building a more widespread sense of safety on the social network isn't easy, and referenced mistakes it has made in the past, like when it decided to stop notifying users when they are added to a list. At the time, users pointed out that the move worked against safety, leaving them even more in the dark about who was interacting with their Twitter profile.

We’re learning a lot as we continue our work to make Twitter safer – not just from the changes we ship but also from the mistakes we make, and of course, from feedback you share.

Online safety has become a big concern for many social networks. Besides Twitter, Instagram has updated its app to let users moderate keywords that appear in the comments of their posts, as well as turn comments off completely. Facebook today also announced a suite of new suicide prevention tools aimed at leveraging AI systems and contacting at-risk users based on their actions within comments, posts, and Facebook Live videos.

Today's updates follow safety tweaks introduced by Twitter in February where it updated how users report abusive Tweets, prevented the creation of new abusive accounts, created safer search results, collapsed "low-quality" Tweets in conversations, and reduced notifications from conversations initiated by blocked or muted users. The new updates will be rolling out globally in the coming days and weeks.
