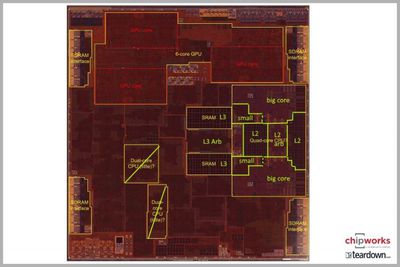

In the recent leak of information from Apple, a device tree shared by Steven Troughton-Smith and containing information specific to the iPhone X was used to glean CPU code names, the presence of an OLED display, and many other details. That information also included specifics about the architecture behind Apple's new CPU cores, dubbed "Mistral" and "Monsoon." From this, we know that the A11 contains four Mistral cores and two Monsoon cores, and it's worth taking a technical look at what Apple might be up to with this new chip.

While the two Monsoon cores are clear follow-ons to the two large "Hurricane" cores in the A10, the four Mistral cores double the small-core count from the A10's two "Zephyr" cores.

Annotated die shots of the A10 ultimately revealed that the small Zephyr cores appeared to be embedded within the larger Hurricane cores, taking advantage of that physical proximity to share memory structures with them.

The Mistral cores appear to be a departure from the above scheme, at the very least in that they have doubled in count. The device tree also makes specific references to the memory hierarchy that suggest the Mistral cores contain independent L2 caches, meaning they could be more independent than their A10 ancestors.

This independence is underscored by the fact that the Mistral cores share a common "cluster-id" property, while the Monsoon cores share a distinct cluster-id of their own. The A10 drew immediate comparisons to ARM's big.LITTLE heterogeneous CPU core scheme, and the A11 seems to go further down that path, with distinct operating states for each cluster of cores. The shared resources in the A10 did bring benefits, however, namely savings in die space and power consumption. More independent cores are closer to a traditional big.LITTLE approach, which also entails more overhead.
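To make the cluster-id observation concrete, here is a minimal Python sketch of how cores could be grouped by that property. The node list is a hypothetical, simplified stand-in: the node names, the numeric cluster-id values, and the dictionary layout are all assumptions for illustration, not the actual contents of the leaked device tree.

```python
from collections import defaultdict

# Hypothetical, simplified stand-in for the CPU nodes in a device tree.
# Names, cluster-id values, and layout are illustrative assumptions only.
CPU_NODES = [
    {"name": "cpu0", "core": "Mistral", "cluster-id": 0},
    {"name": "cpu1", "core": "Mistral", "cluster-id": 0},
    {"name": "cpu2", "core": "Mistral", "cluster-id": 0},
    {"name": "cpu3", "core": "Mistral", "cluster-id": 0},
    {"name": "cpu4", "core": "Monsoon", "cluster-id": 1},
    {"name": "cpu5", "core": "Monsoon", "cluster-id": 1},
]


def group_by_cluster(nodes):
    """Return a dict mapping each cluster-id to the names of its cores."""
    clusters = defaultdict(list)
    for node in nodes:
        clusters[node["cluster-id"]].append(node["name"])
    return dict(clusters)


if __name__ == "__main__":
    for cluster_id, cores in sorted(group_by_cluster(CPU_NODES).items()):
        print(f"cluster {cluster_id}: {', '.join(cores)}")
```

Under these assumptions, the four small cores fall into one group and the two large cores into another, which is the pattern the shared cluster-id values point to.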

This all may be an oversimplification, of course. After all, we know that each of these CPU cores is independently addressable, and nothing revealed so far indicates that an active Mistral or Monsoon core (or cluster) precludes the other CPU type from also being active, opening the door for mixed processor scenarios. Apple could have decided to spend effort, either in hardware, compilers, or both, to segregate instructions by complexity and ultimately forward them to the core that would execute them most efficiently.

Tackling problems in this manner would be another example in a long list of Apple's attempts to improve instruction execution efficiency through microarchitecture enhancements.

Any architectural change is ultimately made in pursuit of some improvement. If Apple is making a change that includes doubling the number of lower-power cores, it seems inevitable that it's spending more die space to do so, particularly if those cores have their own cache structures from L2 on down.

Yet, as pointed out by AnandTech editor Ian Cutress, ARM has begun allowing for configurable cache sizes for its offering of cores. In this specific case, a non-existent L2 cache is a valid configuration, meaning the increase in die space may not be as much as it initially seems with the small core count growth.

It's important to remember that Apple is not bound to these ARM conventions, but they are an indication of where the industry is headed. It's also important to remember that the shared L3 cache is always sitting above all of the cores, along with the GPU and image signal processor. Ultimately, these architectural changes likely boil down to a performance per watt increase, an instructions per clock cycle increase, or perhaps both. Given that the small tasks a Mistral core might be activated for would likely not expose the parallelism needed for all four cores, it seems some interesting usage scenarios are a strong likelihood with Apple's A11 SoC.

To give the mixed-core ensemble of the A11 context, modern CPUs aggressively manage performance and power consumption by dynamically changing clock speeds and processor voltages, and even by disabling entire CPU cores by gating their clocks and power. There are numerous references to all of these concepts in the software, in addition to several references to dynamic CPU and core control, as well as instructions per clock cycle, memory throughput thresholds, power thresholds, and even hysteresis to keep the cores from spinning up and down as the performance profile changes. No doubt many of these properties existed in the A10 as well, but the fact that Apple is increasing the small core count shows Apple believes there's more benefit to be had here.
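As a rough illustration of why hysteresis matters, the Python sketch below wakes a hypothetical large-core cluster only when load rises above an upper threshold and shuts it down only when load falls below a lower one. The function names, the threshold values, and the use of a single "load" figure are all assumptions for the sake of the example; nothing here reflects Apple's actual power management logic.

```python
def next_state(active, load, up_threshold=0.75, down_threshold=0.40):
    """Decide whether a hypothetical big-core cluster should be powered.

    The thresholds are illustrative assumptions. The gap between them is
    the hysteresis band: inside it, the cluster keeps its current state.
    """
    if not active and load > up_threshold:
        return True   # load is high enough to justify waking the cluster
    if active and load < down_threshold:
        return False  # load is low enough to gate the cluster off
    return active     # inside the band: no change, so no flapping


def simulate(loads, up_threshold=0.75, down_threshold=0.40):
    """Run next_state over a sequence of load samples, starting powered off."""
    active = False
    states = []
    for load in loads:
        active = next_state(active, load, up_threshold, down_threshold)
        states.append(active)
    return states


if __name__ == "__main__":
    samples = [0.5, 0.8, 0.6, 0.5, 0.3, 0.5]
    for load, state in zip(samples, simulate(samples)):
        print(f"load={load:.1f} big cluster {'on' if state else 'off'}")
```

With a single threshold, a load hovering near that value would toggle the cluster on every sample. The two-threshold band trades a little responsiveness for far fewer power state transitions.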

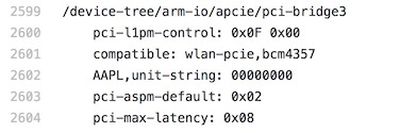

The software contains more details than just the CPU and OLED display, however. It specifically calls out Broadcom's BCM4357 as the Wi-Fi module. This is curious because the BCM4357 is actually a very old Wi-Fi chipset. It seems likely that Apple truncated the trailing 0 from the BCM43570, which fits the 802.11ac profile of the iPhone 7 (and thus would not be an upgrade). However, Broadcom does have a BCM4375 chip on the horizon that supports the forthcoming 802.11ax standard. Unless the keynote specifically addresses Wi-Fi speeds, we may not immediately get clarification here, given that the Wi-Fi chip is often embedded in a larger module, often by component integrator Murata.

Moving over to the display side, the peak brightness in nits property unfortunately seems to be expressed relative to a full-scale value rather than as an actual nits figure. An actual figure could have given insight into whether Apple sought to pursue any of the existing HDR standards on the market, which often require a peak brightness over 1000 nits.

In the audio realm, the CS35L26 reference confirms another Cirrus Logic win for the top and bottom speakers, and the CS42L75 is an undocumented audio codec. Finally, for pure trivia, there's a reference to a 'sochot' property that curiously references the A6X chip identifier. The device tree also contains an 'N41' reference in the baseband section, which refers to the codename of the iPhone 5, the model that introduced LTE to the iPhone family. These may, however, simply be references to the older devices in which features or properties were first introduced.

Apple will undoubtedly reveal some details on the new A11 chip and other internal upgrades for the new iPhones at its event that's just a few hours away now, but other information will have to wait until teardown firms can get their hands on the devices and have a closer look at what's inside.