Apple today debuted a new blog called the "Apple Machine Learning Journal," with a welcome message for readers and an in-depth look at the blog's first topic: "Improving the Realism of Synthetic Images." Apple describes the Machine Learning Journal as a place where readers can find posts written by the company's engineers about their work on the machine learning technologies behind Apple's products.

In the welcome message, Apple encourages those interested in machine learning to contact the company at the blog's dedicated email address, machine-learning@apple.com.

> Welcome to the Apple Machine Learning Journal. Here, you can read posts written by Apple engineers about their work using machine learning technologies to help build innovative products for millions of people around the world. If you’re a machine learning researcher or student, an engineer or developer, we’d love to hear your questions and feedback. Write us at machine-learning@apple.com.

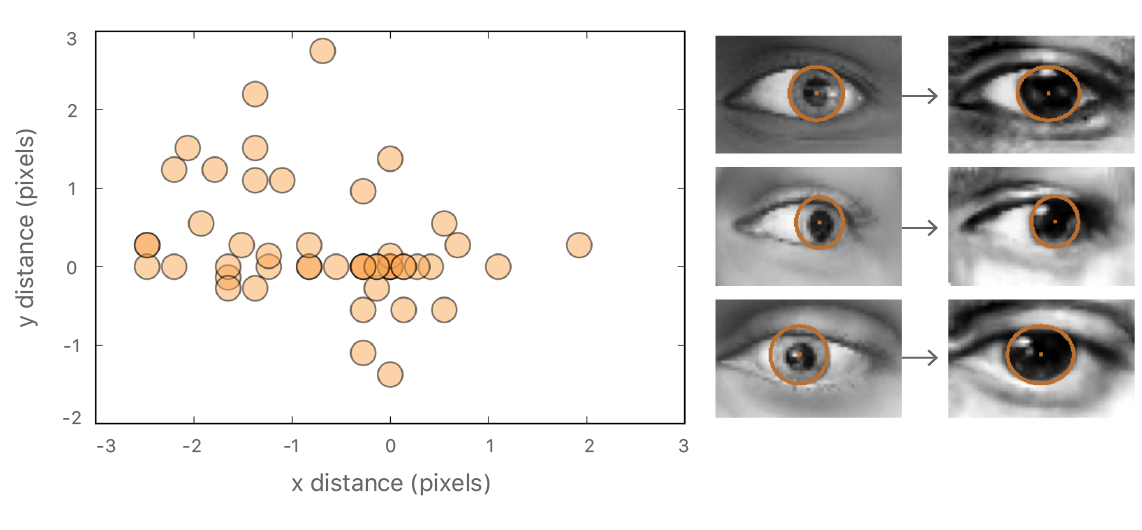

In the first post, labeled Vol. 1, Issue 1, Apple's engineers describe how they train neural networks to refine synthetic images so that they look more realistic. Using synthetic images reduces cost, Apple's engineers point out, but such images "may not be realistic enough" and can result in "poor generalization" on real test images. Because of this, Apple set out to find a way to enhance synthetic images using machine learning.

> Most successful examples of neural nets today are trained with supervision. However, to achieve high accuracy, the training sets need to be large, diverse, and accurately annotated, which is costly. An alternative to labelling huge amounts of data is to use synthetic images from a simulator. This is cheap as there is no labeling cost, but the synthetic images may not be realistic enough, resulting in poor generalization on real test images. To help close this performance gap, we’ve developed a method for refining synthetic images to make them look more realistic. We show that training models on these refined images leads to significant improvements in accuracy on various machine learning tasks.

In December 2016, Apple's artificial intelligence team released its first research paper, which focused on the same topic as the journal's first post: using refined synthetic images to improve image recognition.

The new blog is Apple's latest step in its push into AI and machine learning. During an AI conference in Barcelona last year, the company's head of machine learning, Russ Salakhutdinov, offered a behind-the-scenes look at several of Apple's initiatives in these fields, including health and vital signs, volumetric detection of LiDAR, prediction with structured outputs, image processing and colorization, intelligent assistant and language modeling, and activity recognition. Any of these could become the subject of future research papers and blog posts.

The full first post is available to read on the Apple Machine Learning Journal.