Apple has been granted an augmented reality navigation patent stemming from its acquisition of AR startup Flyby Media earlier this year (via AppleInsider).

The patent was published today by the U.S. Patent and Trademark Office under the title "Visual-based inertial navigation", and describes a system that allows a consumer device to position itself in three-dimensional space using data from cameras and sensors.

The system combines images from an onboard camera with measurements gleaned from a gyroscope and accelerometers as well as other sensors, to build a picture of the device's real-time position in physical space.
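To make the idea of combining the two data sources concrete, here is a minimal one-axis sketch in Python. It is not the method described in the patent: it uses a simple complementary-style blend, and every name in it (`State`, `predict`, `correct`, `track`, the `gain` parameter) is hypothetical. It assumes the camera pipeline already yields a position fix, and it ignores orientation, so no gyroscope term appears.

```python
from dataclasses import dataclass


@dataclass
class State:
    position: float = 0.0  # metres along a single axis
    velocity: float = 0.0  # metres per second


def predict(state, accel, dt):
    """Dead-reckon one step from an accelerometer reading (inertial only)."""
    velocity = state.velocity + accel * dt
    position = state.position + state.velocity * dt + 0.5 * accel * dt * dt
    return State(position, velocity)


def correct(state, camera_position, gain=0.2):
    """Pull the inertial estimate toward a camera-derived position fix.

    Simplification: only position is corrected; velocity is left untouched.
    """
    error = camera_position - state.position
    return State(state.position + gain * error, state.velocity)


def track(accels, camera_fixes, dt=0.01, gain=0.2):
    """Fuse accelerometer samples with optional camera fixes (None = no fix)."""
    state = State()
    history = []
    for accel, fix in zip(accels, camera_fixes):
        state = predict(state, accel, dt)
        if fix is not None:
            state = correct(state, fix, gain)
        history.append(state.position)
    return history
```

Feeding `track` a stationary device with a small constant accelerometer bias shows why the visual input matters: with no camera fixes the position estimate drifts without bound, while occasional fixes keep the error small.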

The patent notes that visual-based inertial navigation systems can achieve positional awareness down to the centimeter scale without the need for GPS or cellular network signals. However, the technology is unsuitable for implementation in typical mobile devices because of the processing demands involved in variable real-time location tracking.

To overcome this limitation, Apple's invention uses what the patent calls a sliding window inverse filter (SWF), which minimizes computational load by using predictive coding to map the orientation of objects relative to the device.

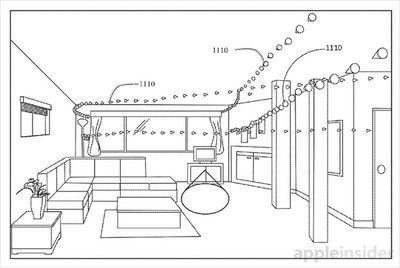

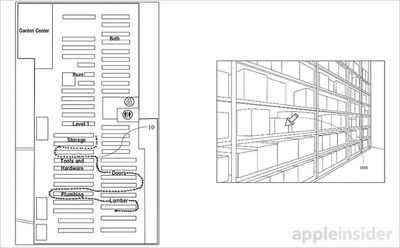

The system could be used in a navigational AR device that overlays an output image with location-based information. One scenario describes how the technology could be used to pinpoint items in a retail store as a user walks among the aisles. Another describes the use of depth sensors to generate a 3D map of a given environment.

Whether Apple will use the patent in an upcoming product is unknown at this time, but the company has been relatively open about its interest in the virtual reality and AR space. Apple is said to have a large team experimenting with headsets and other technologies and is believed to have been working in the area since at least early 2015.

The patent was filed in 2013 and credits former Flyby Media employees Alex Flint, Oleg Naroditsky, Christopher P. Broaddus, Andriy Grygorenko and Oriel Bergig, as well as University of Minnesota professor Stergios Roumeliotis, as its inventors.

Top Rated Comments

Microsoft have gone on record saying that they will be launching HoloLens for Windows 10 next year and it will be integrated into the Windows Desktop or some new 3D AR version of it.

It makes sense for Apple to be developing some Operating System level AR functionality where you can run multiple AR applications at the same time. So you can be running a virtual TV on your wall while you play a virtual game on the table in front of you and look out the window and see the names of stars and planets in the sky provided by your astronomy app for example.

For this to be viable Apple need to update all the Mac range with GPUs that are powerful enough to support AR.

If Apple aren't working on this they will miss the next big thing. This will hit before any Apple Car. This could be the next Mac, iPod, iPhone, iPad type product. iSee?