Snapchat is facing a lawsuit over claims that the app routinely serves sexually explicit content to minors without warning (via The Verge).

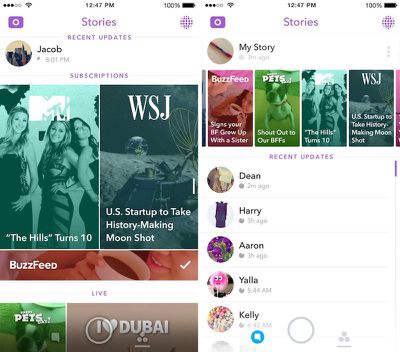

The lawsuit was filed this week by a 14-year-old boy and his mother in a district court in California. The plaintiffs argue that offensive content was shown on Snapchat's Discover page, which delivers content from publications that users have not subscribed to.

The lawsuit says that by routinely including sexually explicit content without providing adequate warnings, the app's Discover feature is in violation of the Communications Decency Act:

> Millions of parents in the United States today are unaware that Snapchat is curating and publishing this profoundly sexual and offensive content to their children. By engaging in such conduct directed at minors, and making it simple and easy for users to 'snap' each other's content from Snapchat Discover, Snapchat is reinforcing the use of its service to facilitate problematic communications, such as 'sexting,' between minors. Snapchat has placed profit from monetizing Snapchat Discover over the safety of children.

The lawsuit, which is seeking class-action status, asks for civil penalties and a requirement that Snapchat include an in-app warning about sexual content.

Publishers regularly create specialized content for the platform, and Snapchat receives advertising revenue from these partners in return. Users can subscribe to specific publisher channels, but the Discover page also exposes them to publishers they have not subscribed to.

Snapchat claims its partners have editorial independence, but according to The Verge (also a content provider for Snapchat), the company exercises a heavy hand in guiding the look and feel of published stories.

Snapchat is rated in the App Store as appropriate for children ages 12 and over, with a note that it may contain infrequent or mild sexual content, nudity, suggestive themes, profanity, and references to drugs and alcohol. That contrasts with Snapchat's terms of service, which restrict use to those 13 and older.


Top Rated Comments

So I'm blaming the older generation :p

It's a shame there aren't many safe harbors for kids anymore. But it is what it is. And so my husband and I diligently sift through the kids' communications. The tricky part is dealing with the fact we can't control what a kid sees and hears at a friend's house if the friend's parents aren't as diligent and tech savvy. So we have to do a lot of talking about our expectations and what we deem appropriate or inappropriate and downright stupid and degrading and self destructive online activity and how we expect our child to react to peer pressure. And we have to do so in a way that doesn't scare a kid off from confiding in us. My daughter sees me as a "cool mom" so I'm let in on a lot. For now. My position is always as precarious as that of a cop working with a mob snitch and requires as much finesse.

Despite all that effort, we'd have to be incredibly naive and stupid to think some stuff isn't getting past us once in a while. We were kids once, too...