The United States Patent and Trademark Office today published a patent filed by Apple last March, which details how inline proximity sensors could be used in tandem with a touchscreen display to detect non-contact hover gestures (via AppleInsider).

The patent, titled "Proximity and multi-touch sensor detection and demodulation", reveals how photodiodes or other proximity hardware work in parallel with traditional multitouch displays to extend user interaction beyond the screen surface.

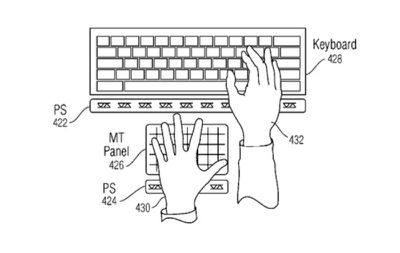

One embodiment of the patent describes a capacitive sensing element that, combined with a range of proximity sensors and an LCD display, would let users perform gestures above a traditional keyboard, resulting in a "virtual keyboard".

With multiple proximity arrays deployed on every touch sensor or pixel of the panel, the system can detect a finger, palm or other object hovering over the display surface. The detected motion is then translated into GUI input, letting users "push" virtual buttons, trigger functions without physical touch, toggle power to devices and more.
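
To make the idea concrete, here is a minimal sketch of how a grid of hover readings might be mapped to a virtual button. It is an illustration only, not the method described in the patent: the normalized readings, the 0.5 threshold, the weighted-centroid step and every name (`Button`, `hover_centroid`, `hovered_button`) are assumptions made for the example.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Button:
    """A hypothetical on-screen button covering a rectangle of sensor cells."""
    name: str
    row0: int
    col0: int
    row1: int  # inclusive
    col1: int  # inclusive

    def contains(self, row: float, col: float) -> bool:
        return self.row0 <= row <= self.row1 and self.col0 <= col <= self.col1


def hover_centroid(grid: List[List[float]], threshold: float) -> Optional[Tuple[float, float]]:
    """Return the reading-weighted centre of all cells at or above threshold."""
    total = row_sum = col_sum = 0.0
    for r, row in enumerate(grid):
        for c, value in enumerate(row):
            if value >= threshold:
                total += value
                row_sum += r * value
                col_sum += c * value
    if total == 0:
        return None  # nothing is hovering close enough
    return row_sum / total, col_sum / total


def hovered_button(grid: List[List[float]], buttons: List[Button],
                   threshold: float = 0.5) -> Optional[str]:
    """Name of the button under the hovering object, or None."""
    centroid = hover_centroid(grid, threshold)
    if centroid is None:
        return None
    for button in buttons:
        if button.contains(*centroid):
            return button.name
    return None


# Assumed example: a 4x4 panel with readings normalized to 0..1.
buttons = [Button("play", 0, 0, 3, 1), Button("power", 0, 2, 3, 3)]
grid = [
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.9, 0.6],
    [0.0, 0.0, 0.1, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]
print(hovered_button(grid, buttons))
```

A real system would presumably also filter noise and track the object over time to tell a deliberate "push" from a passing hand; the sketch covers only a single frame.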

Various configurations of the technology are outlined in the patent, including one that describes a MacBook featuring assistive hover-sensing displays that augment typing and trackpad input.

As with any filed patent, the technology is unlikely to appear in any product soon, if at all, especially given that Apple only recently introduced 3D Touch support and is still actively encouraging app developers to make more use of the feature.

Apple has expressed interest in non-contact user interfacing and motion control for some time. In 2013, for example, the company acquired PrimeSense, the firm responsible for the original technology used by Microsoft for its Kinect platform.
