More than a decade ago, Walter Isaacson began working on a book to highlight the history of computers and the Internet, but the project was sidelined in early 2009 when he took on the task of writing Steve Jobs' authorized biography. That book, which debuted just weeks after Jobs' death in October 2011, topped best seller charts and revealed a number of interesting details about Jobs and Apple.

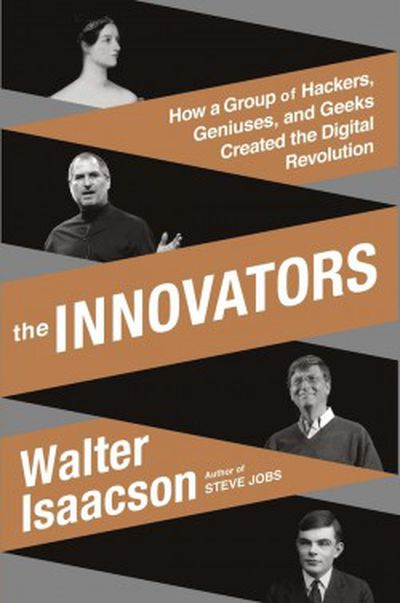

Following the publication of *Steve Jobs*, Isaacson returned to his earlier project of documenting the history of computing, and that work debuts tomorrow as *The Innovators: How a Group of Hackers, Geniuses, and Geeks Created the Digital Revolution*. While Apple and Jobs play relatively minor roles in the book, it offers an interesting overall look at how computers and the Internet developed into what they are today.

Isaacson breaks his book into nearly a dozen sections, highlighting a number of advancements along the way. It begins with Ada Lovelace and Charles Babbage outlining their thoughts on a mechanical "Analytical Engine" in the 1830s and 1840s, then jumps ahead nearly 100 years to Vannevar Bush and Alan Turing, whose visions anticipated the earliest computers that followed soon after. Later sections address advances in programming, transistors, microchips, video games, and the early Internet before turning to the modern personal computer and the World Wide Web.

Throughout the book, Isaacson stresses the importance of teamwork rather than individual genius in the development of computers, with successful teams frequently pairing the contrasting but complementary personalities of visionaries, technical experts, and managers. Well-known examples include Steve Jobs and Steve Wozniak at Apple, or Bob Noyce, Gordon Moore, and Andy Grove at Intel, but the pattern extends much further: time and again, teams have been responsible for many of the biggest innovations.

> Innovation comes from teams more often than from the lightbulb moments of lone geniuses. This was true of every era of creative ferment. [...] But to an even greater extent, this has been true of the digital age. As brilliant as the many inventors of the Internet and computer were, they achieved most of their advances through teamwork.

Isaacson also emphasizes the importance of building on previous discoveries, including collaboration both within and between generations of scientists. A number of characters in the book appear at multiple stages, often first as innovators themselves and later helping to foster discoveries by the next generation.

Other observations include the roles of government, academia, and business in the development of computing, and how the three frequently came together, particularly in the early days, to drive advancements. Isaacson also uses several cases to argue that innovation works best when different business models compete against each other. This is particularly true in software development, where Apple's integrated systems vied with Microsoft's unbundled model while the free and open-source approach held its own position in the market.

> Each model had its advantages, each had its incentives for creativity, and each had its prophets and disciples. But the approach that worked best was having all three models coexisting, along with various combinations of open and closed, bundled and unbundled, proprietary and free. Windows and Mac, UNIX and Linux, iOS and Android: a variety of approaches competed over the decades, spurring each other on -- and providing a check against any one model becoming so dominant that it stifled innovation.

Packing the entire history of computing into 500 pages leaves some topics feeling brief and others left out altogether, but Isaacson's book gives an interesting overview for readers who may not be familiar with the decades of technical advances that have given rise to the current state of the art. Focusing more on people and relationships than on technical details, it offers some insight into how breakthroughs have been made and why some innovators gained fame and fortune while others slipped into near obscurity.