Researchers at Microsoft claim to have created a new speech recognition technology that transcribes conversational speech as well as a human does (via The Verge).

The system's word error rate is reportedly 5.9 percent, about equal to that of professional transcribers asked to work on the same recordings, according to Microsoft.
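For readers unfamiliar with the metric, word error rate is conventionally computed as the number of word substitutions, deletions, and insertions needed to turn the system's transcript into the reference transcript, divided by the number of words in the reference. The following is a minimal sketch of that standard definition, not Microsoft's evaluation code; the function name `word_error_rate` and the sample sentences are made up for illustration, and real evaluations typically normalize case and punctuation first.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length.

    Illustrative helper only: splits on whitespace and does no
    normalization of case or punctuation.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # deleting all i reference words so far
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,                      # deletion
                curr[j - 1] + 1,                  # insertion
                prev[j - 1] + substitution_cost,  # match or substitution
            ))
        prev = curr

    return prev[-1] / len(ref)


# Hypothetical example: one substitution ("sat" -> "sit") and one
# deletion (the second "the") against a six-word reference.
rate = word_error_rate("the cat sat on the mat", "the cat sit on mat")
print(f"{rate:.1%}")
```

By this definition, a 5.9 percent rate corresponds to roughly 59 word errors per 1,000 words of reference transcript.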

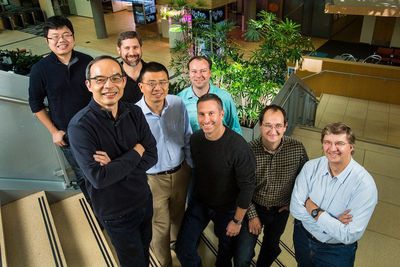

"We've reached human parity," said chief speech scientist Xuedong Huang in a statement, calling the milestone "an historic achievement".

To reach the milestone, the team used Microsoft’s Computational Network Toolkit, a homegrown system for deep learning that the research team has made available on GitHub via an open source license. The system uses neural network technology that groups similar words together, which allows the models to generalize efficiently from word to word.
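The idea of grouping similar words together is commonly implemented by giving each word a vector of numbers, so that related words end up close to one another. The sketch below is a toy illustration of that general idea only: the three-dimensional vectors are invented by hand, the helper names `cosine_similarity` and `nearest` are hypothetical, and nothing here is taken from Microsoft's system, where such vectors would be learned from training data.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-made toy vectors (assumption for illustration, not learned values).
toy_vectors = {
    "fast": [0.9, 0.1, 0.0],
    "quick": [0.8, 0.2, 0.1],
    "banana": [0.0, 0.1, 0.9],
}


def nearest(word, vectors):
    """Return the other word whose vector is most similar to `word`."""
    return max(
        (other for other in vectors if other != word),
        key=lambda other: cosine_similarity(vectors[word], vectors[other]),
    )


print(nearest("fast", toy_vectors))
```

Because "fast" and "quick" sit close together in this toy space, whatever a model learns about one can carry over to the other, which is the kind of word-to-word generalization described above.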

The neural networks draw on large amounts of data called training sets to teach the transcribing computers to recognize syntactical patterns in the sounds. Microsoft plans to use the technology in Cortana, its personal voice assistant in Windows and Xbox One, as well as in speech-to-text transcription software.

But the technology still has a long way to go before it can claim to master meaning (semantics) and contextual awareness, key characteristics of everyday language use that must be grasped before Siri-like personal assistants can process requests and act on them in a helpful way.

"We are moving away from a world where people must understand computers to a world in which computers must understand us," said Harry Shum, who heads the Microsoft AI Research group. However, it will be a long time before computers can understand the real meaning of what's being said, he cautioned. "True artificial intelligence is still on the distant horizon."

Top Rated Comments

New paragraph.

The Microsoft experiment, comma, allegedly, comma, transcribes ordinary recorded speech and dialog without any additional effort on the part of the speaker, period.

....and you can stop reading here. As both an Apple and MS customer, I never believe a word MS says on future products until it hits the market. And then it is usually 1/2 as good with 1/3 of the features as the promises.

I'm watching the Voyager and Enterprise series again now on Netflix. Never get bored of them. :)

Hard to figure out Apple these days. They had very accurate speech recognition and speech synthesis (comparatively) back in the early 80s when the Mac first came out. Remember the Talking Moose?