Nearly All Mobile Device Makers Cheat on Benchmarks, Except Apple and Motorola

Following Tuesday's report that Samsung artificially inflates its benchmarking scores, well-respected hardware review site AnandTech has published evidence suggesting nearly all mobile manufacturers, with the exception of Apple and Motorola, use CPU/GPU optimizations to game benchmark tests.

Samsung and other OEMs use a variety of methods to boost device performance when a benchmark is detected. For example, on the Galaxy S 4, Samsung raised its thermal limits (and maximum GPU frequency) to gain an edge in certain benchmarks, and also pushed the CPU to its highest voltage/frequency state when a benchmark was detected, a tactic also employed by other manufacturers, including LG, HTC, and ASUS.

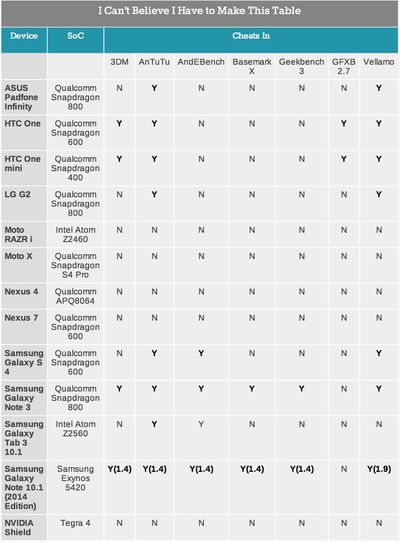

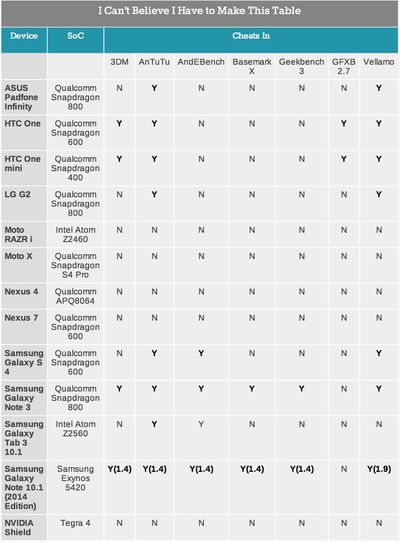

In the table below, AnandTech highlights devices that detect benchmarks and immediately respond by switching to maximum CPU frequency.

With the exception of Apple and Motorola, literally every single OEM we’ve worked with ships (or has shipped) at least one device that runs this silly CPU optimization. It’s possible that older Motorola devices might’ve done the same thing, but none of the newer devices we have on hand exhibited the behavior. It’s a systemic problem that seems to have surfaced over the last two years, and one that extends far beyond Samsung.

AnandTech notes that discovering which devices have been optimized for which benchmarks is a continual "cat and mouse" game, because any benchmark that OEMs target must then be avoided.

The only realistic solution is to continue to evolve the suite ahead of those optimizing for it. The more attention you draw to certain benchmarks, the more likely they are to be gamed. We constantly play this game of cat and mouse on the PC side, it's just more frustrating in mobile since there aren’t many good benchmarks to begin with. […]

There's no single solution here, but rather a multi-faceted approach to make sure we’re ahead of the curve. We need to continue to rev our test suite to stay ahead of any aggressive OEM optimizations, we need to petition the OEMs to stop this madness, we need to work with the benchmark vendors to detect and disable optimizations as they happen and avoid benchmarks that are easily gamed.

Despite all of the effort that OEMs put into benchmark optimizations, the gains are negligible: CPU tests showed a performance increase of 0 to 5 percent, and GPU benchmarks showed an increase of less than 10 percent.
