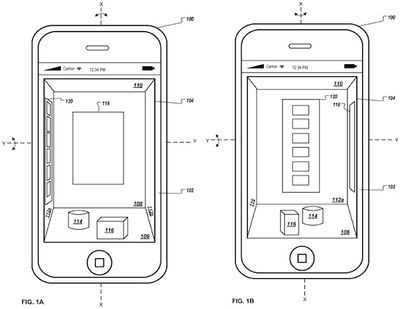

Patently Apple reports on a newly published patent application from Apple describing the use of motion sensors to create a virtual three-dimensional interface for iOS devices. Using concepts similar to those found in a number of existing augmented reality apps, the general device interface could appear as a virtual room that could be navigated by changing the orientation of the device.

The invention covers a 3D display environment for a mobile device that uses orientation data from one or more onboard sensors to automatically determine and display a perspective projection of the 3D display environment based on the orientation data, without the user physically interacting with (e.g., touching) the display.

Apple describes the use of gyroscope sensors that would allow the "camera view" of the virtual room to rotate as the user rotates their device. Apple also mentions that sensors could be used to detect gestures above the surface of the device's display in order to allow for natural 3D manipulation of the user interface environment.
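To make the gyroscope-driven "camera view" more concrete, here is a minimal Python sketch. It is not Apple's implementation. It assumes a simplified room that is navigated by yaw (rotation about the vertical axis) only, and the `RoomCamera` class and its methods are hypothetical names chosen for illustration. Each gyroscope sample (an angular rate in radians per second) is integrated over its sample interval to update the camera's heading, and that heading determines which part of the room is in view.

```python
import math


class RoomCamera:
    """Hypothetical virtual-room camera whose yaw follows the device's rotation."""

    def __init__(self, yaw=0.0):
        # Heading in radians; 0 is assumed to face the room's "front" wall.
        self.yaw = yaw

    def apply_gyro(self, rate_z, dt):
        # Integrate the gyroscope's angular rate (rad/s) about the vertical
        # axis over one sample interval, wrapping the result to [0, 2*pi).
        self.yaw = (self.yaw + rate_z * dt) % (2 * math.pi)

    def view_direction(self):
        # Unit vector on the floor plane (x, z) along which the camera looks.
        return (math.sin(self.yaw), math.cos(self.yaw))


# Usage: rotating the device at pi/2 rad/s for one second (100 samples at
# 10 ms) turns the virtual camera a quarter turn toward the next wall.
cam = RoomCamera()
for _ in range(100):
    cam.apply_gyro(math.pi / 2, 0.01)
print(round(cam.yaw, 6), [round(c, 6) for c in cam.view_direction()])
```

Integrating raw gyroscope rates drifts over time, so a real iOS app would more plausibly read the already-fused device attitude from Core Motion (for example, device-motion updates from `CMMotionManager`) instead of summing samples itself.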

Finally, Apple's patent application discusses a "snap to" feature that would allow users to easily transition between various "walls" within the virtual room.
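The filing does not spell out how "snap to" is computed here, but one simple way to picture it is rounding the current heading to the nearest wall. The Python sketch below assumes a square room with four walls spaced a quarter turn apart; the `WALLS` list and the `snap_to_wall` function are hypothetical.

```python
import math

# Hypothetical four-wall room, ordered clockwise starting from yaw = 0.
WALLS = ["front", "right", "back", "left"]


def snap_to_wall(yaw):
    """Return (wall_name, snapped_yaw) for the wall nearest to yaw (radians)."""
    quarter = math.pi / 2
    # Round to the nearest quarter turn; Python's % keeps the index in 0..3
    # even when yaw is negative.
    index = round(yaw / quarter) % 4
    return WALLS[index], index * quarter


print(snap_to_wall(0.3))            # slightly off the front wall
print(snap_to_wall(-0.9))           # turned left past the halfway point
print(snap_to_wall(math.pi + 0.1))  # near the back wall
```

A production interface would likely animate the transition and add some hysteresis so the view does not flicker between two walls when the heading sits near the 45-degree boundary; both are omitted here for brevity.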

In another patent application filed in mid-2007, Apple discussed a similar notion of multi-dimensional desktops in Mac OS X, showing a virtual room with various groups of icons on the different walls of the room. Apple followed that up with another patent application looking at hyper-reality displays that would allow for orientation- and motion-based manipulation of 3D objects.